Introduction to API Management

Microsoft acquired a company named Apiphany last year (read about the acquisition) and entered the API Management market. So what is API Management? Below is the definition Google gives for the question; it is a fairly descriptive definition.

Microsoft Azure API Management is backed by the compute and storage of Microsoft Azure. The rest of this post explains how to get started with API Management.

Getting Started with Microsoft Azure API Management

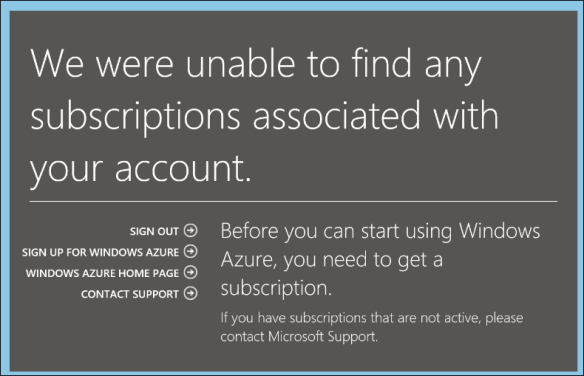

Log in to the Microsoft Azure portal, go to API Management and create an API Management service. In the first screen of the wizard you specify the URL, select the subscription (if you have more than one) and choose the region.

On the next screen you enter your organization name and the administrator email. (You can simply enter your personal email here; it doesn't need to be an address on your organization's domain. I used my Hotmail ID.)

On this screen you can also select the advanced settings option, which opens the third wizard panel. There you can select the tier. Two tiers are available, Developer and Standard; Developer is selected by default.

See the difference between the tiers: http://azure.microsoft.com/en-us/pricing/details/api-management/

Now the API Management service has been provisioned.

Creating APIs

Click the arrow icon to open the service, then click Management Console. By default, when you create an Azure API Management service it creates a sample API called Echo API and a sample Product. I deleted all the auto-generated default APIs and Products, and this article walks you through the setup from scratch.

API Management requires a backend service, which is the real web service we want to expose to developers via API Management. I created a simple REST service using Web API and hosted it in Azure Websites at http://nebulacustomers.azurewebsites.net/

With that information we can now start using Azure API Management. First we have to create an API. In the management console, click APIs and create one.

Enter the name of the API and the web service URL. The Web API URL suffix groups and categorizes the service endpoints as you create more APIs. It is optional, but it makes your life easier as the number of APIs grows. HTTPS is selected by default.
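
As a rough illustration of how the suffix fits in, the URL a developer calls can be thought of as the gateway host, then the API URL suffix, then the operation path. The sketch below assumes a hypothetical gateway host nebula.azure-api.net and a hypothetical suffix "nebula"; your real host is the URL you chose in the first wizard screen.

```python
# Hypothetical gateway host; substitute the URL chosen when creating the service.
GATEWAY = "https://nebula.azure-api.net"


def public_url(gateway: str, api_suffix: str, operation_path: str) -> str:
    """Join gateway host, optional API URL suffix and operation path."""
    parts = [gateway.rstrip("/")]
    suffix = api_suffix.strip("/")
    if suffix:  # the suffix is optional, so skip it when empty
        parts.append(suffix)
    parts.append(operation_path.lstrip("/"))
    return "/".join(parts)


print(public_url(GATEWAY, "nebula", "/customers"))
# https://nebula.azure-api.net/nebula/customers
```

Grouping every operation of an API under one suffix keeps URLs from different APIs from colliding as the number of APIs grows.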

Adding Operations

Technically speaking, operations are the trigger points of the web service in API Management. Click the API we created (Nebula Customers), select the Operations tab and click ADD Operation.

Here we can create operations and point them to our backend web service. Several operations can point to a single endpoint in the backend service. My backend service has only two endpoints.

http://nebulacustomers.azurewebsites.net/api/customers – List of customers

http://nebulacustomers.azurewebsites.net/api/customers?name=<name> – Gets the specified customer object.

We will create three operations: two of them point to the first endpoint, and the third points to the endpoint with the name parameter.

Create three operations as follows.

Operation to list the customers

- HTTP Verb – GET

- URL Template – /customers

- Rewrite URL Template – /api/customers

- Display Name – List of customers

Operation for the cached customers

- HTTP Verb – GET

- URL Template – /cachedcustomers

- Rewrite URL Template – /api/customers

- Display Name – List of cached customers

- Then go to the Cache tab and check Enable.

Note that the above two operations point to the same endpoint in the backend service, because their rewrite URL templates are the same. The caching is done by API Management, and the backend service isn't aware of it.
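
To make the "backend isn't aware of it" point concrete, here is a toy sketch of a gateway-side response cache with a time-to-live. It is not how API Management is implemented internally; the class name, the TTL value and the fake backend are all hypothetical and only show that repeat requests are answered without contacting the backend.

```python
import time
from typing import Callable, Dict, Tuple


class GatewayCache:
    """Toy response cache keyed by backend path, with a fixed time-to-live."""

    def __init__(self, backend: Callable[[str], str], ttl_seconds: float = 60.0):
        self.backend = backend
        self.ttl = ttl_seconds
        self._entries: Dict[str, Tuple[float, str]] = {}

    def get(self, path: str) -> str:
        now = time.monotonic()
        hit = self._entries.get(path)
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1]  # served from cache; the backend is never contacted
        body = self.backend(path)  # miss or expired entry: forward to backend
        self._entries[path] = (now, body)
        return body


calls = []


def fake_backend(path: str) -> str:
    """Hypothetical stand-in for the real Web API backend."""
    calls.append(path)
    return '[{"name": "example"}]'


cache = GatewayCache(fake_backend, ttl_seconds=60)
cache.get("/api/customers")
cache.get("/api/customers")
print(len(calls))  # 1: the second request never reached the backend
```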

Third operation to get the customer with the specified name.

- HTTP Verb – GET

- URL Template – /customers/{name}

- Rewrite URL Template – /api/customers?name={name}

- Display Name – Get the customer by name
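
The URL template and rewrite URL template pairs above can be sketched as a small routing table. This is a simplified model, assuming each {parameter} matches exactly one path segment and the incoming path is already decoded; API Management's real template matching supports more than this.

```python
from typing import Dict, Optional
from urllib.parse import quote

# (HTTP verb, URL template, rewrite URL template), as configured above.
OPERATIONS = [
    ("GET", "/customers", "/api/customers"),
    ("GET", "/cachedcustomers", "/api/customers"),
    ("GET", "/customers/{name}", "/api/customers?name={name}"),
]


def match_template(url_template: str, path: str) -> Optional[Dict[str, str]]:
    """Return template parameters if path matches url_template, else None."""
    t_parts = url_template.strip("/").split("/")
    p_parts = path.strip("/").split("/")
    if len(t_parts) != len(p_parts):
        return None
    params: Dict[str, str] = {}
    for t, p in zip(t_parts, p_parts):
        if t.startswith("{") and t.endswith("}"):
            params[t[1:-1]] = p  # capture the segment as a parameter value
        elif t != p:
            return None
    return params


def route(method: str, path: str) -> Optional[str]:
    """Map an incoming request to the backend path it is rewritten to."""
    for verb, template, rewrite in OPERATIONS:
        if verb != method:
            continue
        params = match_template(template, path)
        if params is None:
            continue
        for name, value in params.items():
            # Percent-encode so values like "John Smith" are safe in a query string.
            rewrite = rewrite.replace("{" + name + "}", quote(value, safe=""))
        return rewrite
    return None


print(route("GET", "/customers/Alice"))  # /api/customers?name=Alice
```

Both /customers and /cachedcustomers resolve to the same backend path, which is why the caching in the second operation is invisible to the backend.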

After adding all three operations you will see a screen similar to this.

Creating Products

Now that we have our API and operations, to expose the API to developers as a packaged module we create a Product and associate the API with it. One Product can contain many APIs, and developers who subscribe to a Product get access to all the APIs associated with it.
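
The Product/API relationship can be modelled roughly as follows. This is a hypothetical data model for illustration only (the Product name "Nebula" is made up); it shows that a developer's reachable APIs are the union of the APIs in every Product they subscribe to.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Product:
    """Hypothetical model: a Product groups one or more APIs."""
    name: str
    apis: Set[str] = field(default_factory=set)


def accessible_apis(subscribed: List[Product]) -> Set[str]:
    """Union of all APIs across the Products a developer subscribes to."""
    result: Set[str] = set()
    for product in subscribed:
        result |= product.apis
    return result


nebula = Product("Nebula", {"Nebula Customers"})
print(sorted(accessible_apis([nebula])))  # ['Nebula Customers']
```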

Go to the Products tab and create a new Product.

On this screen, check “Require subscription approval” if you want to receive email requests to approve subscriptions; you have to configure this email address in the notifications section. The second checkbox, “Allow multiple simultaneous subscriptions”, lets developers create more than one subscription for the product. Each subscription is identified by a unique key, and with this option you can also specify the maximum number of simultaneous subscriptions.
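
The two checkboxes can be read as rules applied when a developer subscribes. The sketch below is a hypothetical model of those rules, not API Management's implementation; in particular, the key format (32 random hex characters) is an assumption made only so each subscription gets a unique key.

```python
import secrets
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ProductSettings:
    require_approval: bool = False       # "Require subscription approval"
    allow_multiple: bool = False         # "Allow multiple simultaneous subscriptions"
    max_subscriptions: Optional[int] = None  # only used when allow_multiple is True


@dataclass
class Subscription:
    developer: str
    key: str
    state: str  # "active", or "pending" while awaiting administrator approval


class SubscriptionBook:
    """Hypothetical per-Product registry of subscriptions."""

    def __init__(self, settings: ProductSettings):
        self.settings = settings
        self.subs: List[Subscription] = []

    def subscribe(self, developer: str) -> Subscription:
        existing = [s for s in self.subs if s.developer == developer]
        if existing:
            if not self.settings.allow_multiple:
                raise ValueError("only one subscription allowed for this product")
            limit = self.settings.max_subscriptions
            if limit is not None and len(existing) >= limit:
                raise ValueError("maximum simultaneous subscriptions reached")
        state = "pending" if self.settings.require_approval else "active"
        sub = Subscription(developer, secrets.token_hex(16), state)  # unique key
        self.subs.append(sub)
        return sub
```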

After creating the Product, click it to open it, then associate the APIs with it by clicking ADD API to Product.

Go to the Visibility tab in the product section and check Developers. Developers need to authenticate themselves in the Developer Portal, subscribe to Products and obtain subscription keys in order to use the API. Guests are unauthenticated users who are allowed to view the APIs and operations but not to call them. Administrators are the people who create and manage APIs, operations and Products.
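
The three roles described above can be summarized as two checks: can a role see a Product, and can it call the APIs in it. This is a hypothetical sketch of the rules as stated in this post (guests view only; developers need visibility plus a subscription key; administrators see everything), not a description of the portal's internal logic.

```python
from enum import Enum
from typing import Set


class Role(Enum):
    GUEST = "guest"
    DEVELOPER = "developer"
    ADMINISTRATOR = "administrator"


def can_view(role: Role, visible_to: Set[Role]) -> bool:
    """Administrators manage everything; others need the Product's visibility."""
    return role is Role.ADMINISTRATOR or role in visible_to


def can_call(role: Role, visible_to: Set[Role], has_key: bool) -> bool:
    """Guests may browse but never call; callers also need a subscription key."""
    if role is Role.GUEST:
        return False
    return can_view(role, visible_to) and has_key
```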

After enabling visibility for developers, publish the product to make it available in the Developer Portal.

Developer Portal

Now that the API is built and published, it's the developers' job to use the Developer Portal and subscribe to the product. Click the Developer Portal link in the top right-hand corner. When you're working as the administrator and click the Developer Portal link, you are logged in to the Developer Portal as the administrator.

The above is the default view of the Developer Portal. You can brand the portal if required.

Go to Products and you will see the Product we created; as an administrator you're already subscribed to it. Click the APIS tab and then click the specific API.

Click the List of customers operation and click Open Console to test the service.

Click HTTP GET and invoke the service. The above URL is the full URL, including the subscription key. The response comes back in JSON (the default format of my backend service).
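
Outside the console, a client has to send the subscription key itself. A minimal sketch using only the Python standard library is shown below. It assumes the key can be sent either in the Ocp-Apim-Subscription-Key header or as a subscription-key query parameter; the gateway URL in any call you make is your own, and the example values used here are hypothetical.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request, urlopen


def build_request(url: str, key: str, use_header: bool = True) -> Request:
    """Attach the subscription key as a header (default) or a query parameter."""
    if use_header:
        return Request(url, headers={"Ocp-Apim-Subscription-Key": key}, method="GET")
    sep = "&" if "?" in url else "?"
    return Request(url + sep + urlencode({"subscription-key": key}), method="GET")


def get_json(url: str, key: str):
    """Call the operation and decode the JSON body (performs a network request)."""
    with urlopen(build_request(url, key), timeout=10) as resp:
        return json.load(resp)
```

For example, `get_json("https://nebula.azure-api.net/nebula/customers", "<your key>")` would call the hypothetical List of customers URL; keeping the key in a header avoids it showing up in logged URLs.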

Now invoke the List of cached customers and check the response time.

The first call took 402 ms.

The second call took only 15 ms.
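
If you want to repeat this comparison from code rather than the console, a small timing helper is enough. The sketch below uses a hypothetical stand-in call that sleeps; replace it with a real request to your cached operation. Timings measured from your own machine include network latency, so they will not match the console figures exactly.

```python
import time
from typing import Callable, Tuple, TypeVar

T = TypeVar("T")


def timed(call: Callable[[], T]) -> Tuple[T, float]:
    """Run call() and return its result with the elapsed wall time in ms."""
    start = time.perf_counter()
    result = call()
    elapsed_ms = (time.perf_counter() - start) * 1000
    return result, elapsed_ms


# Hypothetical stand-in for invoking the List of cached customers operation.
_, first_ms = timed(lambda: time.sleep(0.05))
_, second_ms = timed(lambda: time.sleep(0.01))
print(f"first: {first_ms:.0f} ms, second: {second_ms:.0f} ms")
```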

Similarly, invoke Get the customer by name, specifying a parameter. The coolest part of the Developer Portal is that it's really helpful for developers to test the endpoints, and it also generates code in many languages showing how to consume them. Below is the code generated in Objective-C for consuming the customer name endpoint.

Conclusion for the Introduction

Now our API Management service is working. We can control the input and output of the service in more granular ways using policies. We can also configure notifications, customize email templates, set up security, configure identity management for developers and much more. I will cover these in the API Management Advanced tutorial in another blog post.

If you want to try the exact demo explained here, you need the same backend service. You can download it here (requires Visual Studio 2013).

Note the version shows 11.0.9229.2, considering the major version number (in this case 11) we know that this SQL Azure Server is V11.
