Storage is a critical component in the enterprise world. Managing data and its life cycle matters for many reasons, such as optimizing storage usage, managing cost, meeting compliance and archival requirements, and security.

Data is primarily stored in database systems (relational and non-relational) and as files (including data lakes and blobs). In addition, data resides in other systems such as email servers, document systems, file shares, event and messaging pipelines, logs, and caching systems.

Laying out a comprehensive data strategy for an organization is a complex process. However, in most cases the data lands in flat storage as its final, tail-end destination, so managing that storage and its life cycle is an important task.

Let’s consider a simple backup storage scenario.

Assume a relational data source, a SQL Server VM, has the following backup requirements.

| Frequency | Backup Type | Number of backups | Access Frequency |
| --- | --- | --- | --- |
| 4 hours | Incremental | 42 | Medium |
| Daily | Full | 30 | High |
| Weekly | Full | 12 | High |
| Monthly | Full | 12 | Low |
| Semi-Annual | Full | 6 | Very Low |
| Yearly | Full | 8 | Very Low |

At any given time (assuming a complete 8-year span), 110 backups must be maintained. Each of those 110 backups should be kept in the right storage tier based on its access frequency and retention period.
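The total of 110 can be checked with a short calculation. The following is a minimal Python sketch, not part of any Azure tooling. The interval lengths used for the monthly, semi-annual, and yearly rows (30, 182, and 365 days) are approximations assumed here for illustration.

```python
from datetime import timedelta

# (frequency label, backup type, number of backups kept, interval between backups)
# The monthly, semi-annual, and yearly intervals are approximations (assumptions).
schedule = [
    ("4 hours", "Incremental", 42, timedelta(hours=4)),
    ("Daily", "Full", 30, timedelta(days=1)),
    ("Weekly", "Full", 12, timedelta(weeks=1)),
    ("Monthly", "Full", 12, timedelta(days=30)),
    ("Semi-Annual", "Full", 6, timedelta(days=182)),
    ("Yearly", "Full", 8, timedelta(days=365)),
]

# Total number of backups that exist at any given time.
total_backups = sum(count for _, _, count, _ in schedule)

# Approximate time window covered by each row: count x interval, in days.
retention_days = {
    label: (count * interval).days for label, _, count, interval in schedule
}

print("Total backups:", total_backups)
for label, days in retention_days.items():
    print(f"{label}: covers about {days} days")
```

The per-row window is what drives tier selection: the 4-hour incrementals only span about a week, while the yearly backups span the full 8 years and are rarely read.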

Azure Storage provides access tiers that help us determine and automatically manage these storage requirements. Azure Storage (general-purpose v2) lets us define life cycle policies at the blob level.

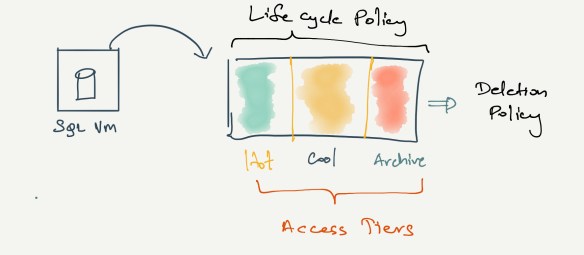

The below diagram depicts this

As shown in the illustration, there are three access tiers: hot, cool, and archive. The hot and cool tiers can be set at the storage account level, while the archive tier is set at the individual blob level.

We can define life cycle policies that automate blob movement between tiers, from hot to archive and all the way to deletion, to match our requirements.

A sample life cycle policy for a blob:


```json
{
  "rules": [
    {
      "enabled": true,
      "name": "yearly backup rule",
      "type": "Lifecycle",
      "definition": {
        "actions": {
          "baseBlob": {
            "tierToCool": {
              "daysAfterModificationGreaterThan": 30
            },
            "tierToArchive": {
              "daysAfterModificationGreaterThan": 60
            },
            "delete": {
              "daysAfterModificationGreaterThan": 370
            }
          }
        },
        "filters": {
          "blobTypes": [
            "blockBlob"
          ],
          "prefixMatch": [
            "backups/annual"
          ]
        }
      }
    }
  ]
}
```

As you can see, the filters section lets us specify the path to which the rule applies. This way, a storage account can have more than one rule, each addressing a different path.
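To illustrate several rules on different paths, here is a minimal Python sketch that builds a policy document in the same shape as the sample and models which action a blob of a given age would receive. The helper names `make_rule` and `expected_action`, the `monthlyBackups` rule, and its thresholds are hypothetical. The evaluation logic is a simplified local model that assumes the least expensive matching action wins (delete, then archive, then cool); it is not the service's actual implementation.

```python
import json

def make_rule(name, prefix, cool_after, archive_after, delete_after):
    """Build one lifecycle rule dict in the same shape as the sample policy."""
    return {
        "enabled": True,
        "name": name,
        "type": "Lifecycle",
        "definition": {
            "actions": {
                "baseBlob": {
                    "tierToCool": {"daysAfterModificationGreaterThan": cool_after},
                    "tierToArchive": {"daysAfterModificationGreaterThan": archive_after},
                    "delete": {"daysAfterModificationGreaterThan": delete_after},
                }
            },
            "filters": {"blobTypes": ["blockBlob"], "prefixMatch": [prefix]},
        },
    }

policy = {
    "rules": [
        # Same thresholds as the sample policy above.
        make_rule("annualBackups", "backups/annual", 30, 60, 370),
        # Hypothetical second rule for a different path (assumed values).
        make_rule("monthlyBackups", "backups/monthly", 7, 30, 365),
    ]
}

def expected_action(policy, blob_path, age_days):
    """Simplified model: return the action the first matching rule would apply."""
    cheapest_first = ["delete", "tierToArchive", "tierToCool"]
    for rule in policy["rules"]:
        if not rule["enabled"]:
            continue
        prefixes = rule["definition"]["filters"]["prefixMatch"]
        if not any(blob_path.startswith(p) for p in prefixes):
            continue
        actions = rule["definition"]["actions"]["baseBlob"]
        for action in cheapest_first:
            threshold = actions[action]["daysAfterModificationGreaterThan"]
            if age_days > threshold:  # strictly greater than, as the key name says
                return action
        return "none"
    return "none"  # no rule covers this path

print(json.dumps(policy, indent=2))
print(expected_action(policy, "backups/annual/2023.bak", 61))
```

Building the rules from one helper keeps the paths and thresholds side by side, which makes it easier to review that each backup category has a matching rule before the JSON is applied to the storage account.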

Of the different options in Azure Storage, we need a standard general-purpose v2 account to get the access tier capability. Standard Blob storage accounts also support access tiers. Standard storage is backed by magnetic disks.

Premium storage, in contrast, is backed by SSDs but does not offer access tiers. It is intended for page blobs, such as virtual machine disks. In addition to page blobs, premium storage can be used for blob storage and file shares.

In summary, this is the high-level view of the available options in Azure Storage.