It is all over the Internet that Elon Musk is on a mission to buy Twitter, and the Internet is going crazy about it. Part of that is simply because Elon is doing it; anything Elon is involved in gets amplified on the Internet for some reason. I thought about what would happen if Elon succeeds with this deal and how it would change Twitter.

I am not a fanatical follower of Elon. If I had to name one thing I like about him, it is his ability to gather great engineering teams and relentlessly push them to achieve great things. I have listed here a few things I would love to see in Twitter, hoping Elon will do them.

Inherent authenticity should be strengthened with Web 3 features

Twitter started as a micro-blogging platform but quickly earned a reputation for legitimacy. When someone tweets, the tweet is often treated as an official statement from that person. Tweets cannot be edited, and this characteristic gives Twitter a strong sense of authenticity. Twitter can strengthen this with Web3 technologies. Web3 is interpreted in many ways, but the focus here is on its decentralized and verifiable characteristics. Tweets can be stored on, or backed by, a verifiable cryptographic platform, which would extend authenticity beyond a single entity (Twitter itself) controlling it.
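To make "verifiable" a bit more concrete, here is a minimal sketch of a hash-chained, append-only tweet log. This is only an illustration of the idea and not how Twitter or any particular Web3 platform works; the names `append_tweet`, `verify_log`, and `GENESIS` are my own assumptions. Each entry commits to the hash of the previous one, so editing an old tweet breaks the chain and anyone holding a copy of the log can detect it.

```python
import hashlib
import json

# Hypothetical placeholder hash that the first entry points back to.
GENESIS = "0" * 64


def entry_hash(prev_hash: str, author: str, text: str) -> str:
    """Hash a tweet together with the hash of the entry before it."""
    payload = json.dumps(
        {"prev": prev_hash, "author": author, "text": text}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_tweet(log: list, author: str, text: str) -> dict:
    """Append a tweet to the log, linking it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {
        "prev": prev_hash,
        "author": author,
        "text": text,
        "hash": entry_hash(prev_hash, author, text),
    }
    log.append(entry)
    return entry


def verify_log(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry fails the check."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(entry["prev"], entry["author"], entry["text"]):
            return False
        prev_hash = entry["hash"]
    return True
```

In a real decentralized setup the log (or just its latest hash) would be replicated across many independent parties, which is what takes control away from a single entity.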

Bots should be regulated

Twitter is infested with bots. Getting rid of all the bots is not easy, and it also has implications for some existing platforms and business models. Having the right checks and balances and regulating the bots is the more likely path to success. Validating the purpose of a bot, giving bots limited reach and letting them earn trust to gradually expand that reach, marking bots with a distinct flag, and identifying and validating the real entities behind the bots are a few of the many ways to regulate them.
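The "limited reach that grows with trust" idea could look roughly like the sketch below. Everything here is assumed for illustration: the `BotAccount` fields, the reach constants, and the trust increments are made-up values, not anything Twitter has published.

```python
from dataclasses import dataclass

# Assumed illustrative limits: how many users a bot's tweets may reach.
BASE_REACH = 100
MAX_REACH = 100_000


@dataclass
class BotAccount:
    handle: str
    operator_verified: bool  # has the real entity behind the bot been validated?
    purpose_approved: bool   # has the bot's stated purpose been validated?
    trust: float = 0.0       # earned trust, kept between 0.0 and 1.0


def reach_limit(bot: BotAccount) -> int:
    """Unvalidated bots get no reach; validated bots scale with earned trust."""
    if not (bot.operator_verified and bot.purpose_approved):
        return 0
    return int(BASE_REACH + bot.trust * (MAX_REACH - BASE_REACH))


def record_behaviour(bot: BotAccount, violations: int) -> None:
    """Raise trust slowly after a clean period, cut it sharply on violations."""
    if violations > 0:
        bot.trust = max(0.0, bot.trust - 0.2 * violations)
    else:
        bot.trust = min(1.0, bot.trust + 0.05)
```

The asymmetry is deliberate in this sketch: trust is slow to earn and quick to lose, so a bot cannot build reach and then abuse it cheaply.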

New Business Models

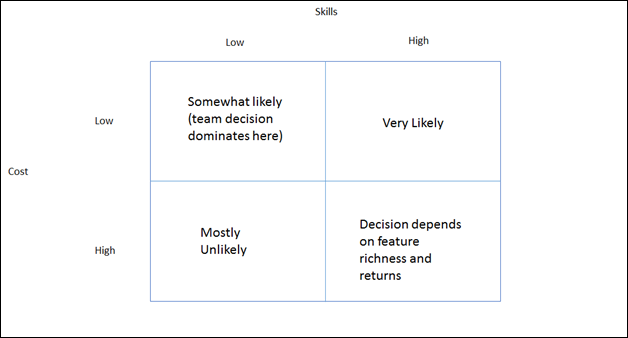

Twitter has weak revenue compared to other social media platforms. Major social media platforms are focused on the content economy. Twitter does not have a content-based economic model, so it is almost impossible for Twitter to earn substantial revenue from content advertisements.

But Twitter is known for its legitimacy, so Twitter should validate the entity behind every Twitter account. This is easy considering the advancements in AI. If you feel I am being too dreamy, consider that Uber already does this with a high success rate. When accounts are verified, several opportunities open up.

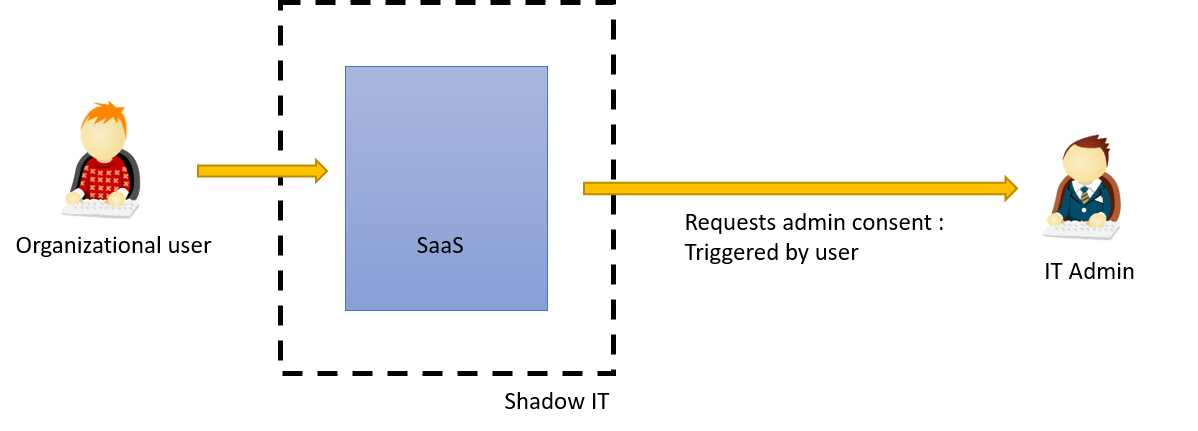

- Twitter becomes the only verified IAM (identity and access management) system at such scale on the Internet, verified and established with Web3 technologies. (Panic button ON for FB and Apple ID.) When an identity is verified, many services can leverage it.

- Combining this globally verifiable IAM model with metaverse elements such as verified metaverse beings opens up many other business models in the metaverse. A digital twin of a verified person can hold a verified presence in the metaverse.

- Subscription charges for certain Twitter accounts. This should be a small flat fee, to keep the power balanced, and should not be coupled with any promotional benefits.

- Twitter is already testing a few Web3 features. Each tweet from a verified account (as discussed above, all accounts can be verified), once linked to a decentralized platform, will automatically become an NFT.

Putting it all together: say you have a Twitter account with your username and password. First, Twitter would let you verify yourself by submitting the required information. Then you become a verified user (this does not mean you have to be a celebrity). Once you are verified, Twitter can issue you a verifiable Decentralized Identifier (DID). These identifiers become platform-neutral decentralized identities for the users (hopefully this can be achieved with the liberal and innovative culture Elon would bring in). Users can then move across other digital platforms, including many metaverse options, with these verified decentralized identities. This will enable users to create an authentic digital presence. Digital service providers benefit from linking authentic digital beings with real humans, which enriches their services. At the heart of this, users have full control over their identities and can decide whether or not to trust their digital interactions. If these verifiable decentralized identities become a reality, even future elections could use the Twitter-backed (but not Twitter-controlled) decentralized identities.
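The flow above (sign up, verify, receive a DID, use it elsewhere) can be sketched as follows. This is a toy model under loud assumptions: `REGISTRY` is a plain dictionary standing in for a decentralized registry, the DID is derived from a hash of the username purely for illustration, and there are no cryptographic keys or signatures, which a real DID system would need. The `did:example:` prefix is the placeholder method used in examples, not a production DID method.

```python
import hashlib
from dataclasses import dataclass
from typing import Optional

# Stand-in for a decentralized registry. A real one would be replicated
# across independent parties rather than held in one process.
REGISTRY = {}


@dataclass
class Account:
    username: str
    verified: bool = False
    did: Optional[str] = None


def verify_account(account: Account, evidence_ok: bool) -> None:
    """Mark the account verified once the submitted information checks out."""
    account.verified = evidence_ok


def issue_did(account: Account) -> str:
    """Issue a DID, but only to an account that has already been verified."""
    if not account.verified:
        raise ValueError("account must be verified before a DID is issued")
    # Hypothetical identifier scheme: a truncated hash of the username.
    digest = hashlib.sha256(account.username.encode("utf-8")).hexdigest()[:32]
    did = f"did:example:{digest}"
    REGISTRY[did] = {"issuer": "twitter", "verified_human": True}
    account.did = did
    return did


def accept_on_other_platform(did: str) -> bool:
    """Another platform trusts the DID only if it resolves in the registry."""
    record = REGISTRY.get(did)
    return bool(record and record["verified_human"])
```

The point of the sketch is the ordering: no DID without verification, and other platforms check the shared registry rather than asking Twitter directly, which is the "backed but not controlled" part.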

Twitter was once one of the most revolutionary and forward-thinking companies. Vine and Periscope were good examples of this. However, they are also good examples of how such forward-thinking ideas can end up in the garbage without strong visionary leadership. Elon has a great talent for building great engineering teams. Personally, I like Twitter very much and would love to see its future with Elon.