Some of you might have noticed that Visual Studio 2015 adds a ‘Configure Azure AD Authentication’ option to the project context menu.

When you click this option, a wizard opens and does most of the heavy lifting of configuring Azure AD for your application. The wizard is feature-rich and also reasonably flexible. In this post I walk you through the straightforward way of configuring Azure AD authentication in your application and explain the code the wizard generates. Read this article to learn more about Azure AD authentication scenarios.

First, you need an Azure AD domain in which to register your application. The user who performs this action should be in the Global Administrator role for that Azure AD directory.

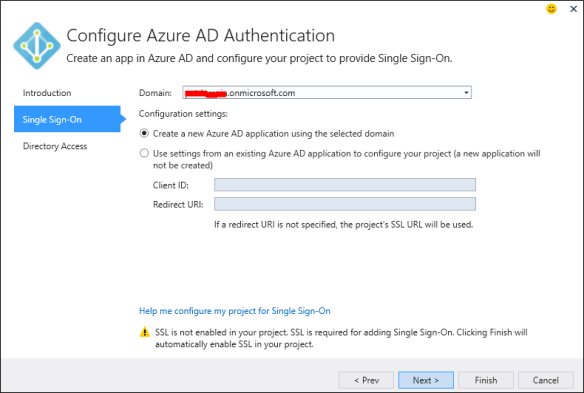

Once you have these prerequisites in place, click the option shown above.


Step 1

- Enter your Azure AD domain

- Choose ‘Create a new Azure AD application using the selected domain’.

- If you already have an application configured in your Azure AD, you can enter the client ID and the Redirect URI of that application instead.

Step 2

- Tick the Read Directory data option so the application can read data from your Azure AD directory

- You can use the properties you read as claims in your application

- Click Finish
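The claims your application works with arrive in the id_token that Azure AD issues at sign-in. The minimal Python sketch below shows what those claims look like by decoding the payload of a JWT. Python is used only for illustration here and is not part of the C# code the wizard generates. The claim values in the demo token are hypothetical, and the function deliberately skips signature validation, which the OWIN middleware performs for you in the real application.

```python
import base64
import json


def decode_jwt_payload(token: str) -> dict:
    """Return the claims in a JWT payload WITHOUT verifying the signature.

    Inspection only: in the real app the OpenID Connect middleware
    validates the token before exposing its claims.
    """
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("a JWT must have three dot-separated parts")
    payload = parts[1]
    # JWT segments are unpadded base64url; restore padding before decoding.
    payload += "=" * (-len(payload) % 4)
    return json.loads(base64.urlsafe_b64decode(payload))


def encode_segment(data: dict) -> str:
    """Hypothetical helper that builds unsigned demo token segments."""
    raw = json.dumps(data).encode("utf-8")
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


# Hypothetical claims; a real Azure AD id_token carries many more.
demo_token = ".".join([
    encode_segment({"alg": "none", "typ": "JWT"}),
    encode_segment({"name": "Jane Doe",
                    "upn": "jane@contoso.onmicrosoft.com",
                    "tid": "aaaaaaaa-bbbb-cccc-dddd-eeeeeeeeeeee"}),
    "",  # no signature in this demo token
])
claims = decode_jwt_payload(demo_token)
print(claims["name"], claims["upn"])
```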

This takes a few minutes and configures Azure AD authentication for your application. It also adds the [Authorize] attribute to your controllers.
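Conceptually, [Authorize] lets authenticated users through and answers everyone else with HTTP 401. The OWIN OpenID Connect middleware then turns that 401 into a redirect to the Azure AD sign-in page. The Python decorator below is a hypothetical analogue of that behaviour, not ASP.NET code. The `authorize` and `index` names and the dictionary-based user are assumptions made for the sketch.

```python
from functools import wraps


def authorize(action):
    """Hypothetical analogue of ASP.NET MVC's [Authorize] attribute."""
    @wraps(action)
    def wrapper(user, *args, **kwargs):
        if not user or not user.get("is_authenticated"):
            # In the OWIN pipeline the OpenID Connect middleware would turn
            # this 401 into a redirect to the Azure AD sign-in page.
            return 401, "Unauthorized"
        return 200, action(user, *args, **kwargs)
    return wrapper


@authorize
def index(user):
    """A protected 'controller action' (hypothetical)."""
    return f"Hello, {user['name']}"


print(index(None))
print(index({"is_authenticated": True, "name": "Jane"}))
```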

If you now log in to the Azure portal and open the Applications section of your Azure AD directory, you will see the newly provisioned application.

Note that the wizard provisions the app as a single-tenant application; you can change it to a multi-tenant application later if you need to.

Configuring single-tenant and multi-tenant applications is beyond the scope of this post.

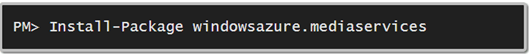

Code

The wizard retrieves information about the app it created, stores it in the configuration file (web.config) and writes code to access it. The main part is Startup.Auth.cs, in the App_Start folder of the web project.
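To make the configuration part concrete, here is a small Python sketch. It reads the `<appSettings>` entries of a web.config and builds the Azure AD authority URL from them. The `ida:*` key names follow the convention used by Visual Studio's Azure AD templates, but the values are made up. The rule that the authority is the instance URL followed by the tenant ID is an assumption based on typical generated code, so check your own web.config and Startup.Auth.cs.

```python
import xml.etree.ElementTree as ET

# Hypothetical web.config excerpt; your file's keys and values may differ.
WEB_CONFIG = """<configuration>
  <appSettings>
    <add key="ida:ClientId" value="11111111-2222-3333-4444-555555555555" />
    <add key="ida:AADInstance" value="https://login.microsoftonline.com/" />
    <add key="ida:Domain" value="contoso.onmicrosoft.com" />
    <add key="ida:TenantId" value="aaaaaaaa-bbbb-cccc-dddd-eeeeeeeeeeee" />
    <add key="ida:PostLogoutRedirectUri" value="https://localhost:44300/" />
  </appSettings>
</configuration>"""


def read_app_settings(xml_text: str) -> dict:
    """Collect every <appSettings><add key=... value=...> pair into a dict."""
    root = ET.fromstring(xml_text)
    return {
        add.get("key"): add.get("value")
        for add in root.findall("./appSettings/add")
    }


settings = read_app_settings(WEB_CONFIG)
# Assumed rule: authority = Azure AD instance URL + tenant ID.
authority = settings["ida:AADInstance"] + settings["ida:TenantId"]
print(authority)
```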

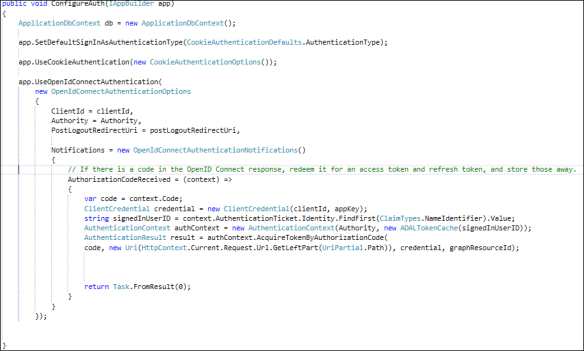

The image below shows the code the wizard generated for a web project that had no authentication configured before.

- The first line creates a data context object for the provisioned Entity Framework model. It is not needed if you do not access any data from the database, so you can simply delete this line.

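- The second line calls SetDefaultSignInAsAuthenticationType. This value is not sent to Azure AD; it tells the OpenID Connect middleware which local authentication type (the cookie authentication type in the generated code) should sign the user in once Azure AD has authenticated them.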

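- The third line enables cookie authentication (UseCookieAuthentication) for the web application, so the signed-in user's identity is kept in a cookie between requests.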

- The fourth statement looks big and confusing, but it is not. It calls UseOpenIdConnectAuthentication, the standard OWIN middleware for OpenID Connect authentication.

- Here we specify the options for Azure AD OpenID Connect authentication and the notification callbacks.

- Since the code above is a bit unclear, I have broken it down into a simpler form.
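When an unauthenticated user reaches a protected controller, the OpenID Connect middleware redirects the browser to the Azure AD authorization endpoint. The Python sketch below builds such a request URL for the Azure AD v1 endpoint. The parameter values (`response_type=code id_token`, `response_mode=form_post`, `scope=openid profile`) reflect what the OWIN middleware typically sends, but treat them as assumptions. The client ID, domain and redirect URI are placeholders.

```python
import secrets
from urllib.parse import urlencode


def build_authorize_url(authority: str, client_id: str, redirect_uri: str,
                        state: str, nonce: str) -> str:
    """Build an OpenID Connect authorization request for the Azure AD v1 endpoint."""
    params = {
        "client_id": client_id,
        "response_type": "code id_token",  # authorization code plus id_token
        "scope": "openid profile",
        "response_mode": "form_post",      # Azure AD POSTs the result back
        "redirect_uri": redirect_uri,
        "state": state,                    # echoed back; guards against CSRF
        "nonce": nonce,                    # embedded in the id_token; guards against replay
    }
    return authority.rstrip("/") + "/oauth2/authorize?" + urlencode(params)


# Placeholder values for illustration only.
url = build_authorize_url(
    authority="https://login.microsoftonline.com/contoso.onmicrosoft.com",
    client_id="11111111-2222-3333-4444-555555555555",
    redirect_uri="https://localhost:44300/",
    state=secrets.token_urlsafe(16),
    nonce=secrets.token_urlsafe(16),
)
print(url)
```

Azure AD then posts the code and id_token back to the redirect URI, which is where the notification callbacks take over.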

I have simplified the code to the point where it retrieves the authorization code. The wizard-generated code goes a step further and also redeems that code for an access token. I haven’t included this part because I wanted to keep the logic as simple as possible. Once you have the authorization code, you can request an access token and use it to call protected resources. This is a separate topic that involves the Azure AD authentication workflow.
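For readers who want to see the step I left out, here is a hedged Python sketch of redeeming the authorization code. It extracts the code from the form-posted callback and builds the request for the Azure AD v1 token endpoint without sending it. The Graph API resource (`https://graph.windows.net`) matches the Read Directory data option chosen in the wizard, but the secret, code and other values are hypothetical. In C#, a library such as ADAL (`AuthenticationContext.AcquireTokenByAuthorizationCode`) performs this exchange for you.

```python
from urllib.parse import parse_qs, urlencode

GRAPH_RESOURCE = "https://graph.windows.net"  # Azure AD Graph API resource ID


def extract_code(form_body: str) -> str:
    """Pull the authorization code out of Azure AD's form_post callback body."""
    fields = parse_qs(form_body)
    if "error" in fields:
        raise ValueError(fields.get("error_description", fields["error"])[0])
    return fields["code"][0]


def build_token_request(authority: str, client_id: str, client_secret: str,
                        code: str, redirect_uri: str,
                        resource: str = GRAPH_RESOURCE) -> tuple:
    """Return (url, body) for redeeming a code at the Azure AD v1 token endpoint.

    Nothing is sent here; a real app would POST the body as
    application/x-www-form-urlencoded.
    """
    body = urlencode({
        "grant_type": "authorization_code",
        "client_id": client_id,
        "client_secret": client_secret,
        "code": code,
        "redirect_uri": redirect_uri,  # must match the one used to get the code
        "resource": resource,
    })
    return authority.rstrip("/") + "/oauth2/token", body


# Hypothetical callback body and credentials.
code = extract_code("code=AwABAAAA-hypothetical&state=xyz&session_state=abc")
token_url, token_body = build_token_request(
    "https://login.microsoftonline.com/contoso.onmicrosoft.com",
    "11111111-2222-3333-4444-555555555555", "hypothetical-secret",
    code, "https://localhost:44300/")
print(token_url)
```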

I will discuss the Azure AD authentication workflow and how to customize applications in a separate post.