Introduction to the Azure Elastic Pools and Elastic Jobs

Azure Elastic Database Pool is a service which helps to share the database resources (DTUs) and the management of many databases easy. This is the tool, for solutions which follow the dedicated database tenant isolation strategy. Because apart from the resource elasticity between the database Elastic Pools have Elastic Jobs which is a separate configuration, which allows to manage the databases from a single interface.

Having different databases for different tenants or for any other isolations, comes with the concern of a shared index of those databases. Application get to know which database to connect from this shared index. Often this index is known as master database.

There can be situations where master database is not required in determining which database to connect when application get this information from some other sources like from a cache or from configurations or from request claims and etc.

Azure Elastic Pool has a tool known as Shared Map Manager (which is the master database), this Shared Map Manager works along with the Elastic Client Library. Elastic Client Library has a good support with the Entity Framework.

When it comes to EF Core, the support of the Elastic Client Library seems not actively available, and EF Core has its own differences compared to Entity Framework as well. This blog post addresses these issues.

Architecture Pattern for using Elastic Database Pool with EF Core

Assume we have a multiple tenants with different dedicated databases and they are configured in a pool. Also we have the Elastic Jobs configured in the subscription. (A subscription can have only one Elastic Jobs interface and multiple pools can use the same interface) Read more about Elastic Jobs and how to configure them

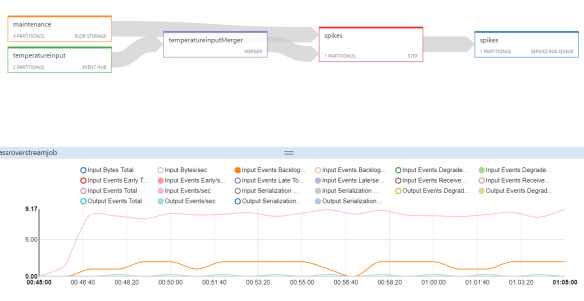

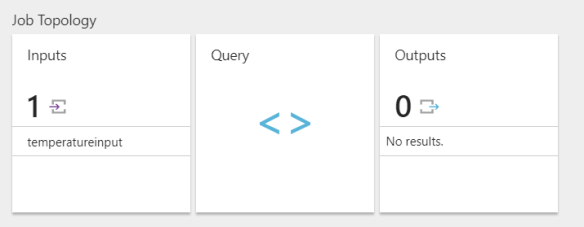

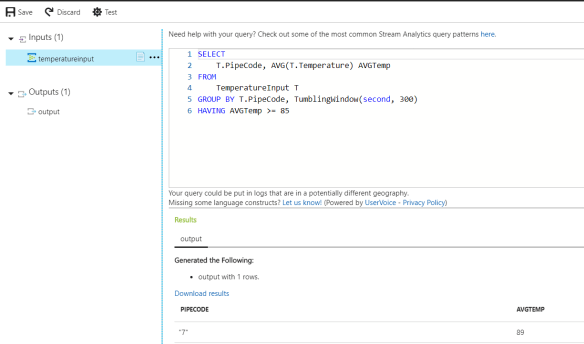

The below figure shows the architecture model for the Azure Elastic Pool development.

The below points summarize the idea.

- Developer generates the code first migrations from his IDE (typically this is VS PMC) pointing to the Developer Db.

- Developer Db can be any valid SQL instance – either an Azure SQL instance or a in the developer machine.

- EF Core DbContext parameter-less constructor should point to the Developer Db.

- In the pool apart from the tenant databases, create a database which holds only the schema – this database is known as Zero Db

- Zero Db will be used to generate the delta of the schema. Developers can use VS Database Project to generate the TSQL of delta.

- The generated delta script will be executed using the Elastic Jobs interface either by any sort of automation or using the Azure portal itself.

Developers can generate the scripts without having the Zero database, using the EF Core commands, but I highly recommend to have a schema only database in the pool, because of the following reasons.

- Zero Db can be used to generate the delta TSQL

- At any given point we have the schema only version of the production schema

- Creating a new tenant database is easy at any point as we can simply copy the Zero Db

Implementation

In order to operate an enterprise scale solution, addition to the working code the entire flow of the development and deployment should be in place.

In order to make the developer migrations work as seamless with the existing VS PMC based experience and also to trigger the right database connection for the right tenant, we need an implementation of DbContext in the following way.

public class MassRoverContext : DbContext

{

public MassRoverContext()

{

}

public MassRoverContext(DbContextOptionsBuilder optionsBuilder)

{

base.OnConfiguring(optionsBuilder);

}

protected override void OnConfiguring(DbContextOptionsBuilder optionsBuilder)

{

{

if (optionsBuilder.IsConfigured == false)

{

optionsBuilder.UseSqlServer("");

}

base.OnConfiguring(optionsBuilder);

}

}

public DbSet RoverEquipment { get; set; }

}

The parameter-less constructor of the DbContext will be used in the migrations.

The constructor takes a DbContextOptionsBuilder<MassRoverContext> will be used in the applications which points to the right database determined by some logic in the application.

The below code snippet shows a common service which determines the correct DbContext for the application in ASP.NET Core

public class ContextService : IContextService

{

private readonly HttpContext _httpContext;

private readonly ConnectionSettings _connectionSettings;

public ContextService(IHttpContextAccessor httpContentAccessor, IOptions settings)

{

_httpContext = httpContentAccessor.HttpContext;

_connectionSettings = settings.Value;

}

public MassRoverContext MassRoverContext

{

get

{

var sqlConnectionBuilder = new SqlConnectionStringBuilder(_connectionSettings.ZeroDbConnectionString);

// determine the mapping based on some value. In this sample the database mapping is determined by a header value tenantid

sqlConnectionBuilder.Remove("Database");

sqlConnectionBuilder.Add("Database", _httpContext.Request.Headers["tenantid"].ToString());

var dbOptionsBuidler = new DbContextOptionsBuilder();

dbOptionsBuidler.UseSqlServer(sqlConnectionBuilder.ConnectionString);

return new MassRoverContext(dbOptionsBuidler);

}

}

}

Notes:

The shared index data source which holds the mapping of the tenant and the database is not shown in the above figure, since this shared index can be anything. In our example for the simplicity, assume the tenant id is in the request claim and this claim holds the name of the database. This SHOULD NOT BE USED IN PRODUCTION

The registration of the services is not included in this post, since posting the entire flow of how EF Core project and ASP.NET Core project connects is outside the scope of this article. But the full code is available in this repo.

Continuous Deployment & Automation

In this section let’s discover how the continuous deployment can be performed. We have the delta TSQL generated either by comparing the schema between the Developer Db and Zero Db or by any other mechanism.

Now we should prepare an automation script or a running code to submit the delta TSQL to the Elastic Jobs interface. Elastic Job will run the job across the databases in the pool.

I created a simple console application which submits the task to the Elastic Jobs interface. Access the code from this repo

The Elastic Jobs SDK is still in preview of the time of this writing and I will be conintously updating this cosole application and will post a seperate blog on how to plug this with VSTS Build and Release Definitions.