NoSQL technologies solve problems that relational databases cannot. At the same time, designing an application on top of a NoSQL technology requires technology-specific design decisions and architecture.

This post addresses that issue and explains how to model a real-world problem using Azure Table Storage. It is neither an introduction to Azure Table Storage nor a code sample; instead, it explains the thinking behind designing applications on Azure Table Storage.

Designing in Azure Table Storage

Azure Table Storage is a key/attribute NoSQL data store. It supports four types of queries:

- Point Query – Query based on Partition Key and Row Key, retrieves a single entity.

- Range Query – Query based on Partition Key and range of Row Keys, retrieves multiple entities.

- Partition Scan – Partition Key is used but Row Key is not known / not used in the query, other non-key fields might be used.

- Table Scan – Partition Key is not used in the query, the Row Key or other non-key fields might be used.
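To make the four query types concrete, here is a minimal sketch that builds the OData-style filter string for each one. The helper names and the `Points` column in the usage note are my own assumptions for illustration; they are not part of any Azure SDK, and the sketch only produces filter text rather than calling the service.

```python
from typing import Optional


def quote(value: str) -> str:
    """Wrap a string as an OData literal, doubling embedded single quotes."""
    return "'" + value.replace("'", "''") + "'"


def point_query(partition_key: str, row_key: str) -> str:
    """Both keys are known: addresses exactly one entity."""
    return f"PartitionKey eq {quote(partition_key)} and RowKey eq {quote(row_key)}"


def range_query(partition_key: str, row_key_from: str, row_key_to: str) -> str:
    """Partition Key plus a half-open range of Row Keys."""
    return (
        f"PartitionKey eq {quote(partition_key)} "
        f"and RowKey ge {quote(row_key_from)} and RowKey lt {quote(row_key_to)}"
    )


def partition_scan(partition_key: str, other_filter: Optional[str] = None) -> str:
    """Partition Key is known, Row Key is not; non-key fields may narrow the result."""
    base = f"PartitionKey eq {quote(partition_key)}"
    return f"{base} and {other_filter}" if other_filter else base


def table_scan(other_filter: str) -> str:
    """No Partition Key at all: every partition has to be searched."""
    return other_filter
```

For example, `partition_scan("1", "Points gt 100")` yields `PartitionKey eq '1' and Points gt 100`. The further down this list a query falls, the more data the service has to read to answer it.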

Scenario

Think of something similar to Stack Overflow or the MSDN forums. (Be mindful that developing a forum at that scale requires many more technologies and strategies than NoSQL alone.) As a scenario, let’s assume we’re going to build a small-scale forum with the following features.

- Forum members can post questions under categories.

- Forum members can reply to posts.

- Users have points based on their forum contribution.

Design

To model our application in Azure Table Storage, we first need to identify the tables. Users, Posts, Replies and Categories are the main tables.

The Categories table can have a single partition, or maybe two partitions: Active and Archived.

The Row Key stores the category name. In the entity class, the CategoryName property has the IgnoreProperty attribute, which makes it a virtual property backed by the Row Key, so there is no physical column called CategoryName in the table. Since the category name is the Row Key, there cannot be duplicate category names within a partition.

Keep the fixed Partition Keys as enums. This avoids mistakes (mostly typing mistakes when dealing with strings) in defining Partition Keys.
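The Category design above can be sketched as follows. This is a Python approximation rather than the C# entity class the post describes: the `Description` column and all class and function names are hypothetical, and the dictionary returned by `to_entity` simply stands in for a stored entity.

```python
from dataclasses import dataclass
from enum import Enum


class CategoryPartition(Enum):
    """Fixed Partition Keys of the Categories table, kept as an enum."""
    ACTIVE = "Active"
    ARCHIVED = "Archived"


@dataclass
class Category:
    partition: CategoryPartition
    category_name: str          # lives in RowKey, not in a column of its own
    description: str = ""       # hypothetical extra column

    def to_entity(self) -> dict:
        """Map the object to the keys and columns that would be stored."""
        return {
            "PartitionKey": self.partition.value,
            "RowKey": self.category_name,
            "Description": self.description,
        }


def active_categories_filter() -> str:
    """Partition Scan filter for all Active categories."""
    return f"PartitionKey eq '{CategoryPartition.ACTIVE.value}'"
```

Because the Partition Key always comes from the enum, a misspelled partition name fails when the code is written instead of silently creating a new partition.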

A simple query (a Partition Scan) retrieves all Active categories.

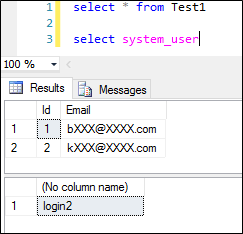

The Users table needs a special design. The email address and password are used as credentials, so the email address must be unique across the entire Users table, regardless of the Partition Key and Row Key combination. So are we going to design the Users table as a single partition with the email being the Row Key?

This is possible, but dumping millions of user records into a single partition is not a good design practice.

The strategy is simple bucketing. I define 6 partitions for the Users table, with the Partition Key simply being a number from 1 to 6, and allocate email addresses to partitions based on their first letter.

Suppose that email addresses starting with ‘a’ to ‘d’ go to partition 1, email addresses starting with ‘e’ to ‘h’ go to partition 2, and so on, as shown in the table below. Because the partition is derived from the address itself, a given email can only ever land in one partition, where the Row Key keeps it unique. This achieves both uniqueness of the email address across the table and partition scalability.

A simple method can decide the Partition Key from the email address.
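A minimal sketch of such a method is given here. Only the first two ranges (‘a’ to ‘d’ and ‘e’ to ‘h’) come from the text; the remaining four ranges, the case-insensitive comparison, and sending addresses that start with a digit or symbol to the last partition are my assumptions.

```python
# (low, high, partition key); only the first two rows are given in the post,
# the rest are assumed for illustration.
USER_BUCKETS = [
    ("a", "d", "1"),
    ("e", "h", "2"),
    ("i", "l", "3"),
    ("m", "p", "4"),
    ("q", "t", "5"),
    ("u", "z", "6"),
]


def user_partition_key(email: str) -> str:
    """Derive the Users table Partition Key from the first character of the email."""
    first = email.strip().lower()[:1]
    if not first:
        raise ValueError("email must not be empty")
    for low, high, key in USER_BUCKETS:
        if low <= first <= high:
            return key
    # Assumption: digits and other characters fall into the last bucket.
    return "6"
```

With the Partition Key computed this way and the email as the Row Key, looking up a user at login is always a Point Query.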

The Posts table has a straightforward design, with the Partition Key being the category name and the PostId (a GUID) being the Row Key. Posts of each category live in a separate partition.

Like the Category entity, the Post entity class links the Partition Key and Row Key to two properties, CategoryName and PostId respectively, both marked with the IgnoreProperty attribute. See the code snippet given below.
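As a rough Python counterpart to that entity class, the sketch below keeps CategoryName and PostId as object properties but stores them only as PartitionKey and RowKey. The `Title` and `Body` columns and the method names are hypothetical.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class Post:
    category_name: str                     # stored as PartitionKey
    title: str                             # hypothetical column
    body: str                              # hypothetical column
    post_id: str = field(default_factory=lambda: str(uuid.uuid4()))  # stored as RowKey

    def to_entity(self) -> dict:
        """Keys carry the category and the id; no separate columns for them."""
        return {
            "PartitionKey": self.category_name,
            "RowKey": self.post_id,
            "Title": self.title,
            "Body": self.body,
        }

    @classmethod
    def from_entity(cls, entity: dict) -> "Post":
        """Rebuild the object, reading the two properties back from the keys."""
        return cls(
            category_name=entity["PartitionKey"],
            title=entity["Title"],
            body=entity["Body"],
            post_id=entity["RowKey"],
        )
```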

If you think that using category names as Partition Keys would let a single partition grow too large, since one category can have hundreds of thousands of rows, you can concatenate the category name with the year and create partitions like Azure-2015 and Azure-2016, or use any other suitable variable.

The point is that if you can calculate the Partition Keys from a formula, you can limit your queries to Partition Scans at worst.
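One way to express such a formula is sketched below, assuming the year comes from the post's creation date. The function names are mine, and the hyphen separator simply follows the Azure-2015 example.

```python
from datetime import datetime, timezone
from typing import List


def post_partition_key(category_name: str, created_at: datetime) -> str:
    """Combine category and creation year, e.g. 'Azure-2015'."""
    return f"{category_name}-{created_at.year}"


def partition_keys_for_years(category_name: str, first_year: int, last_year: int) -> List[str]:
    """All partitions to visit when browsing a category across several years."""
    return [f"{category_name}-{year}" for year in range(first_year, last_year + 1)]
```

For instance, `post_partition_key("Azure", datetime(2015, 6, 1, tzinfo=timezone.utc))` gives `"Azure-2015"`. Listing a category over several years then becomes a handful of Partition Scans, one per computed key, rather than a Table Scan.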

In this scenario, the Replies table has two likely designs.

In the first design there is no separate table for Replies. Instead, the Posts table gets an additional column called ParentId. Posts have an empty ParentId, and replies hold the ID of the post they respond to. Replies go to the same partition as their posts.

The second design has a separate table for Replies. I would personally go for this design, as we can store more detailed information specific to replies.

The Partition Key would be the category name and the Row Key would be the reply ID. PostId would be another column, so finding the replies to a post triggers a Partition Scan.
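The second design might look like the sketch below. The `Body` column and the names are hypothetical, and the filter assumes PostId is stored as a plain string column; a GUID-typed column would need a different literal in the filter.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class Reply:
    category_name: str                     # stored as PartitionKey
    post_id: str                           # ordinary column pointing at the post
    body: str                              # hypothetical column
    reply_id: str = field(default_factory=lambda: str(uuid.uuid4()))  # stored as RowKey

    def to_entity(self) -> dict:
        return {
            "PartitionKey": self.category_name,
            "RowKey": self.reply_id,
            "PostId": self.post_id,
            "Body": self.body,
        }


def replies_filter(category_name: str, post_id: str) -> str:
    """Partition Scan: the partition is known, PostId is a non-key column."""
    category = category_name.replace("'", "''")
    return f"PartitionKey eq '{category}' and PostId eq '{post_id}'"
```

The scan stays inside one category's partition, which is why the category name has to be known when a post's replies are loaded.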

Conclusion

Designing an application on top of any NoSQL technology requires specific planning and architecture based on the domain and the selected NoSQL platform. Knowledge of the underlying NoSQL technology is essential to make the design efficient.

For example, in the above design, if we get a requirement to show the 20 most recent posts on the home page regardless of category, this would definitely trigger a Table Scan, and we would also have to fetch all the posts and sort them by the Timestamp property.

So a good decision would be to have another temporary table that keeps the latest 20 posts. When a new post is added, its ID is written to that table and the oldest entry is removed. We can use write-behind strategies in the application to do this.
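The bookkeeping for that table can be sketched in memory as below. The class is hypothetical and stands in for the real table; I also assume the category name is stored next to the post ID, so each entry can later be fetched from the Posts table with a Point Query.

```python
from collections import deque
from typing import Deque, List, Tuple


class RecentPosts:
    """In-memory stand-in for the small table holding the latest posts."""

    def __init__(self, capacity: int = 20) -> None:
        # A bounded deque drops the oldest entry once capacity is reached.
        self._items: Deque[Tuple[str, str]] = deque(maxlen=capacity)

    def record(self, category_name: str, post_id: str) -> None:
        """Called (possibly write-behind) whenever a new post is saved."""
        self._items.appendleft((category_name, post_id))

    def latest(self) -> List[Tuple[str, str]]:
        """Newest first: (PartitionKey, RowKey) pairs of the Posts table."""
        return list(self._items)
```

The home page then reads at most 20 key pairs and resolves them with Point Queries instead of scanning and sorting the whole Posts table.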

So make sure that you design the application for the technology in a correct and efficient way.

The most misleading claim I hear in the industry is that NoSQL development is easy and takes less time. Both arguments are subjective, and they only make sense in comparison with some other technology, which is usually relational databases. In my experience, I don’t see any significant time savings in using a NoSQL technology. But there are other benefits for sure.